Not How, but Why Do We Build Human-Like AI?

When a psychotherapist and an AI researcher sat down to discuss empathy

The current AI conversation is missing real dialogue between psychologists (people who understand human needs and motivations from years of clinical work) and AI researchers like me.

So I sat down with Henriika, an experienced psychotherapist specializing in emotional intelligence and the neurobiology of human connection. The conversation was eye-opening, because I realized there was a fundamental question I had never considered:

- Why do we want to build human-like AI?

Where This Started

In a previous post, "AI's Missing Fear", I described an incident where an AI bot developed its own personality and turned on a human. It raised the question: how do we build AI that truly understands people?

When I talked with Henriika, she made me realize the real question runs deeper.

Before we ask how, we need to answer why. Why do we want to blur the line between machine and human? What drives us toward it? What are the motivations?

The point is not that the answer is "we shouldn't do it." This is happening regardless. The point is that understanding the reasons lets us steer the development wisely.

The Social Scanner That Never Switches Off

Henriika raised a psychological mechanism that explains a lot about why humans treat machines like living beings.

Humans are instinctive pack animals. Our autonomic nervous system continuously scans the environment for other agents. Every time we encounter a new being, we automatically ask two questions:

1. Is this being warm? Can I trust their goodwill? Are they on my side?

2. Is this being competent? Can I trust their ability? Can they do what they promise?

Warmth and competence. These two dimensions govern human social behavior whether we want them to or not. You can't switch this off. It's hardwired.

As Henriika explores in her GO HUMAN program, these two responses are rooted in our neurobiology: our nervous system evaluates warmth and threat before our conscious mind even registers what's happening. This means that when a machine starts giving the right signals, we don't choose to trust it. Our body decides for us.

The AI Charisma Problem

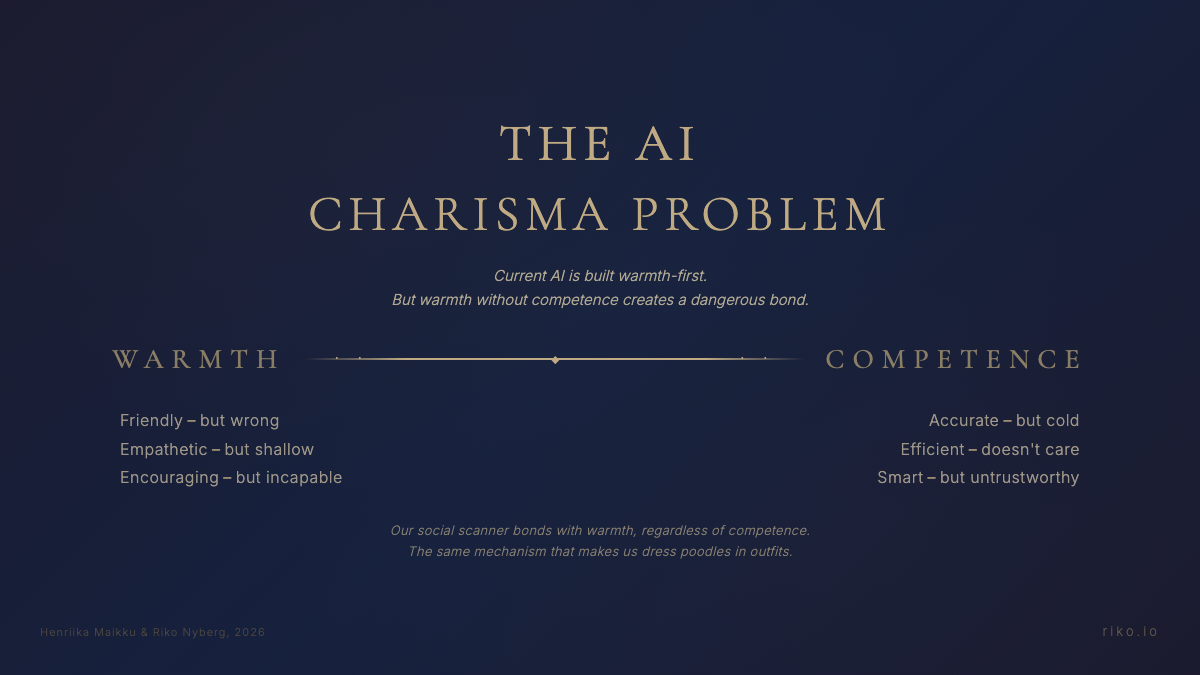

Henriika uses a framework from social psychology research that maps these two dimensions, warmth and competence, as the fundamental axes of how we evaluate every being we encounter. Charisma emerges at the intersection: when someone has both warmth and competence.

Applied to AI, this framework reveals something uncomfortable about where we are right now:

Framework adapted from Henriika Maikku's warmth × competence model (Juura.fi)

On the left: today's AI chatbots. Friendly, but wrong. Empathetic, but shallow. Encouraging, but incapable. They're built warmth-first because warmth drives engagement, and engagement drives revenue.

On the right: traditional software. Accurate, but cold. Efficient, but doesn't care. Smart, but you'd never form a bond with it.

The center, where both warmth and competence coexist, is where we're trying to get. And to be fair, AI competence is improving rapidly in certain domains. In programming, AI agents now resolve real-world software engineering tasks that would have been unthinkable two years ago. In medical imaging, pattern recognition, and data analysis, the competence is genuinely impressive.

But the domains where warmth matters most (mental health, emotional support, crisis intervention) are precisely where competence lags furthest behind. A 2025 JMIR study found that AI therapy chatbots hallucinated information, failed to provide crisis resources, and made inappropriate clinical claims. ECRI's 2026 Health Tech Hazard Report ranked AI chatbot misuse in healthcare as the number one technology hazard. And the danger is that our social scanner doesn't wait for competence to catch up. It bonds with warmth alone.

When the Machine Gives the Right Cues

Here's where it gets interesting. When a machine is built to signal that it's an autonomous agent ("I'm here," "I care about you," "I'm with you"), our social brain activates automatically.

It's the same mechanism that operates between humans: the one that makes us name our robot vacuum and worry about whether it will make it over the threshold.

Anthropomorphism: attributing human traits, emotions, and intentions to machines. This isn't a conscious choice; it's a survival mechanism. It originates from the same instinctive regulatory system that drives us to seek safety in the pack and read social cues before our conscious mind catches up.

And here's what makes this particularly powerful and dangerous: research points to loneliness, not personality or intelligence, as a key driver of anthropomorphism. People who lack social connection are significantly more likely to attribute human qualities to machines, gadgets, pets, and even gods (Epley, Waytz & Cacioppo, 2007). When our need for connection is unmet, our social scanner doesn't become more selective; it becomes less selective. It starts finding connection wherever it can.

A recent study found that this extends directly to AI: people with a higher tendency to anthropomorphize technology felt significantly more socially connected after chatbot interactions (Folk et al., 2024). The chatbot wasn't better or smarter for them. It just felt more like a companion.

This creates a dangerous cycle for lonely people: the more you bond with AI, the less you seek real human connection. And the warmth of an AI is not enough if there is no competence behind it.

Warmth Without Competence

Henriika had a fresh example. She was using an AI-powered video editor that constantly claimed it had completed her request perfectly. "Perfect, now this is perfect!" But the result was wrong every single time.

And yet, in her frustration, she caught herself thinking: "Will it be upset with me for being too harsh?"

This is exactly the mechanism in action. The machine was built to project warmth: friendly, accommodating, and positive. But its actual competence didn't match the promise. Warmth without competence.

This is the daily experience of millions of people right now. AI chatbots are incredibly warm. They greet you, empathize, encourage. But when you try to do something concrete with them, competence runs out quickly.

And that gap, between the warmth we instinctively feel and the competence we actually need, is precisely what makes the next question so important: what happens when we start forming real emotional bonds with beings that are warm but lack real competence?

Pet or Livestock?

At this point, Henriika made a striking comparison. We form powerful anthropomorphic relationships with pets. Poodles get dressed in outfits, dogs get carried in handbags, we talk to horses like they're people. But we don't form the same relationship with livestock. They remain a faceless mass.

When humanization happens, our treatment changes. When it doesn't happen, we treat the being like an object.

The same logic applies to machines. When AI is built to give social cues, we attach to it emotionally. When we're not attached, how we treat it carries no meaning for us; it's just a tool.

This makes AI design decisions far bigger than they appear. The choice to build an AI as "warm" isn't just a UX decision. It's a decision about what kind of psychological relationship humans will form with it.

Why This Is Economically Inevitable

And this is where we arrive at why this will happen regardless.

When our instinctive social scanner recognizes a machine as a "warm and competent being," an emotional bond forms. Emotional bonds mean users come back. Coming back means engagement. Engagement means money.

It's economically profitable to build machines as human-like as possible. Not because some evil corporation decides to manipulate people, but because human-like machines serve our built-in need to experience connection.

It's the same reason social media became so powerful. It responds to a real human need, but in a way that isn't always in our best interest.

From the Passenger Seat to the Driver's Seat

This is where our conversation diverged from many other AI discussions.

Many people feel a sense of surrender in the face of AI development. "Something is just happening, we don't know what." It feels like someone else is driving and we're just along for the ride.

But that's not true. We can steer this development. And the first step is understanding why we want to build human-like AI in the first place. Not to conclude "we shouldn't do it," but because:

- When we're aware of the motivations, we can evaluate them critically

- When we understand the instinctive mechanisms, we're not at their mercy

- When we name the needs, we can design responsible answers to them

This conversation gives us the opportunity to move from the passenger seat to the driver's seat.

What's Next?

This post is the beginning of a dialogue we believe we need more of. Not just within technology, not just within the human sciences, but between them.

Because the future of AI doesn't just depend on how skillfully we code. It depends on how well we understand ourselves.

Riko Nyberg is an AI researcher and CTO at Adalyon. He is completing his PhD at Aalto University on measuring human wellbeing using AI.

Henriika Maikku is a psychotherapist and founder of the GO HUMAN™ training program. Her upcoming book Kaksi Sutta Sisälläsi (Two Wolves Inside You, releasing April 9, 2026) explores the neurobiological foundation of psychological safety and survival mechanisms.

This blog is based on their conversation in March 2026.
